As AI is increasingly used to support writing and manuscript revision, many people have begun to ask a very practical question: how can a piece of writing become more fluent and natural while still preserving scholarly seriousness, transparency, and the writer’s own voice?

At Coffee Talk #4, the discussion therefore went beyond matters of expression. It also opened broader reflection on how people might use such tools responsibly in academic settings.

When AI arrives at the scholarly writing desk, words may not be the writer’s only worry

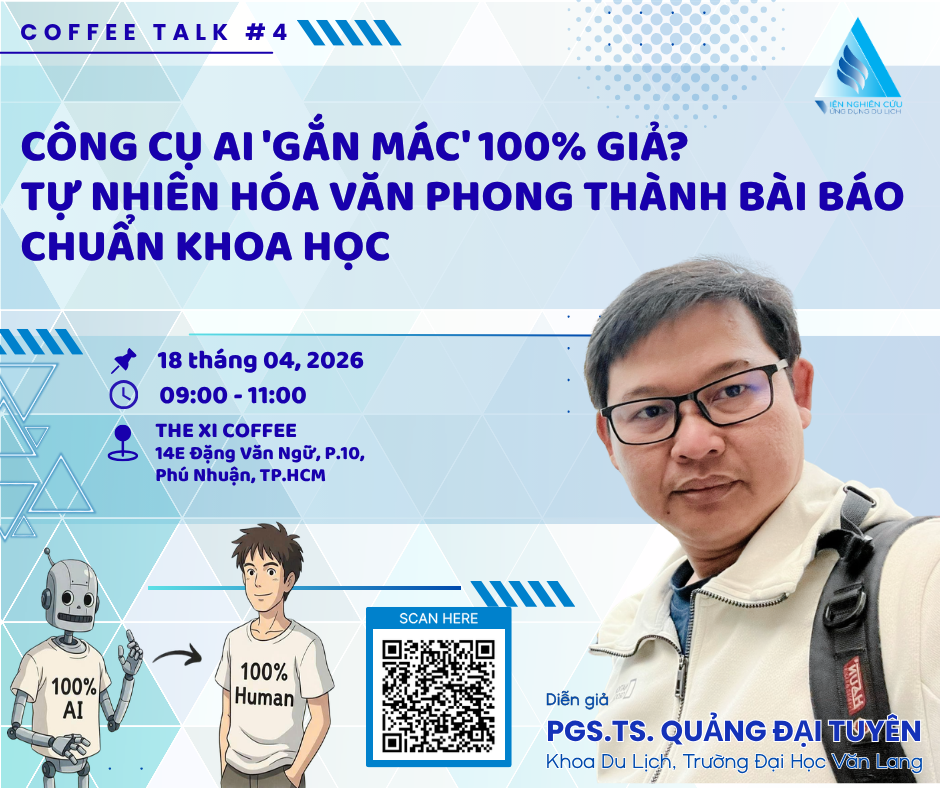

As part of the Coffee Talk academic café series, the fourth session raised an issue close to everyday research life: AI is becoming part of drafting, revising, and refining manuscripts. Alongside this convenience come real concerns about integrity, academic tone, and the writer’s responsibility for their own text.

The session’s theme was “AI tools flagged as 100% fake? Humanizing writing into a proper scholarly article”. Even so, it did not revolve only around how AI-detection tools work or how to make a paragraph “look less machine-generated.” The discussion leaned toward a deeper question: as AI becomes ever more accessible, what should writers do so that their manuscripts retain academic integrity, transparency, and the sense that a thinking subject is at work behind the text?

This is what makes the topic worth attention. Many people are no longer asking whether to use AI at all. They face a different question: how far should it be used, in what way, and, once it has been used, what in the writing remains distinctly their own?

AI may help writing flow more smoothly, but it does not necessarily make it more mature

One clear point from the session was that AI can be quite effective at the surface level of a text. It can rearrange sentences to make them more concise, replace certain words with more “academic” ones, suggest clearer paragraph structures, and make an English manuscript read more smoothly after only a few rounds of revision. For writers under time pressure, or those not yet fully confident in their expression, this is clearly useful support.

That support comes with a familiar risk. The writing may become smoother while losing the natural unevenness of genuine thinking. AI can make a text look “better” very quickly, but that alone does not strengthen the argument or make the writing carry more of the author’s own voice.

This is why the discussion focused less on whether AI may be used and more on whether the writer remains in control of the manuscript. A manuscript supported by a tool at the level of phrasing may still be acceptable. But if the writer hands over almost all of the thinking, the organizing of ideas, the insertion of data, and even the drawing of conclusions, the risk no longer lies merely in language. It reaches the academic foundation of the piece.

Small signs can be enough to make a text feel unnatural

An interesting part of the session focused on details that writers normally overlook. Some of these signs look minor at first glance: the style of quotation marks, the use of long dashes, filler phrases that recur, or sentence rhythms so even that they feel over-polished. A single detail may not attract attention, but when several appear together across a long manuscript, they easily create a distinctly “mechanical” feeling.

The more important issue here is not whether a detection tool can identify such traces, but what they suggest: the text has not yet been edited deeply enough. A scholarly piece is judged not only by whether its sentences are correct, but also by its rhythm, its restraint, and the appropriateness of its language choices. When every sentence is balanced and every paragraph moves in the same pattern, readers can easily feel that the text was “generated” rather than truly “written.”

The session also suggested that many writers, especially when using AI to polish academic English, may unintentionally let their manuscripts drift toward a style that is old-fashioned, ornate, or too invested in the appearance of scholarship. Contemporary academic writing in many fields values clarity, directness, and restraint more highly. A sentence that is too long, too layered with subordinate clauses, or too full of filler does not increase scholarly quality. Often it only makes the writing heavier.

For this reason, rather than trying to “make the text sound human,” a more reasonable approach is to reread it carefully and ask whether each piece of wording truly serves the argument. If a sentence is unnecessary, cut it. If something can be said more briefly, say it more briefly. If a passage feels overstated, tone it down. Editing this way makes the writing feel more natural and also puts the writer back in an active role in shaping the manuscript.

More worrying is AI’s tendency to “fill in the blanks” with information that is not real

Surface-level signs are relatively easy to revise. Data and citations are where the risks become more serious, and the discussion addressed this clearly. AI responds to prompts very quickly. In many cases it can produce a figure that sounds plausible, a statistic that seems persuasive, or a reference that looks perfectly relevant, yet does not exist or cannot be traced to any source.

In academic research, this is not a problem to take lightly. An awkward sentence can be revised. A weak argument can be rewritten. But a figure without a source, an incorrect citation, or a fabricated reference can undermine the credibility of an entire manuscript. This is especially true in fields such as tourism, communication, education, or sociology, where writers rely heavily on statistics, reports, and earlier literature. In these fields, source verification cannot be skipped.

One important suggestion emerged from the discussion: if a source cannot be verified, it is better to stop and acknowledge the limits of the available information than to keep a piece of data simply because it fits the argument neatly. For example, if the relevant authority has not yet released official figures, the writer can say so plainly. If needed, they can then draw on a real survey, an earlier study, or a trustworthy report to support the point more modestly.

Writing this way may not make a paragraph feel instantly “perfect,” but it reflects scholarly values more faithfully: accepting the limits of the data rather than filling gaps with artificial certainty.

A piece of writing that appears too “beautiful” may sometimes leave readers uneasy

Another notable point is that AI tends to make writing excessively polished. The introduction is neat, the transitions are smooth, the conclusion is complete, and on the surface there are no cracks at all. Yet this seamlessness can make a text feel as though it lacks something important in research: a sense of caution.

In academic work, writers often have to accept that they cannot speak too definitively. Data are limited. Context is limited. Methods are limited. Even a study’s contribution usually needs to be presented with some restraint. Because AI is designed to “complete” responses, it may push a manuscript in the opposite direction. It can make claims more strongly than necessary, reach conclusions faster than the evidence warrants, and make the research sound more novel than it is.

This tendency often surfaces in expressions such as “this is the first study,” “this is the only work,” or “this is an unprecedented finding.” In very rare cases, such phrasing may be accurate. But in most research contexts, especially journal articles, theses, or proposals, absolute claims like these easily sound exaggerated. More modest formulations, such as “one of the initial approaches,” “a preliminary opening in this context,” or “a contribution to the existing line of research,” fit a restrained scholarly spirit better.

This kind of “imperfection” is not necessarily a weakness. Caution, the refusal to overstate, and a measured presentation of one’s work are often precisely what make a piece of writing more trustworthy.

The hardest part may not be revising sentences, but preserving the writer’s voice

One thought-provoking observation from the session was that AI may produce grammatically correct, structurally sound, and even superficially logical writing that still fails to convey the writer’s own voice. In academic settings, voice is not merely a matter of style. It also reflects how the writer sees the issue, how much restraint they show in their claims, and where they stand toward the object of study.

Manuscripts shaped too heavily by AI begin to resemble one another. Whatever the topic, the writing opens with a broad general statement, lists factors, and ends with a familiar sentence announcing the research objective. Everything may sound correct, yet the text lacks the sense that only this particular person, in this particular context, could have written it.

For this reason, “humanizing” in an academic context should not be understood as a cosmetic operation. It is better understood as bringing a manuscript back closer to its real writer. This may mean replacing a formulaic introduction with an opening grounded in the research context. It may mean trimming filler sentences. It may mean replacing passive constructions with clearly agentive phrasing such as “we observed,” “this study records,” or “from these initial observations, it may be seen that…”. It may also mean adding a few concrete, evocative details so that the writing feels less dry and less like a mass-produced template.

The discussion also touched on bringing experiences closer to lived reality into the writing, such as sound, light, smell, or other concrete details of context. This is not ornamentation. It is a reminder that academic writing, especially in the social sciences and humanities, needs to stay connected to the lifeworld. A concrete expression in the right place can give a sentence far more vitality than an abstract, distant formulation.

AI may support a draft, but the final writing should still remain human work

One practical issue raised during the session was “using AI to revise AI.” When a paragraph generated by one tool still feels stiff, a writer might use another tool to vary the sentence rhythm, shorten the passage, or produce a more workable draft before revising it by hand. This is now a fairly common practice.

Even so, the discussion did not encourage dependence on a chain of tools. It emphasized that all such tools should stay at the level of draft and suggestion. The more important work still belongs to the writer: rereading with one’s own judgment, considering whether the argument holds, identifying where claims are too strong or too weak, checking that the data are sourced, and asking whether the tone still feels like one’s own.

In other words, AI may help a text take shape more quickly, but it cannot replace the final intellectual labor. It is precisely at this stage of review and revision that the writer reclaims their place as the subject of the work: the one who chooses, omits, accepts limits, and takes responsibility.

A self-review checklist before submission is still worth using

At the end of the session, the speakers offered suggestions in the form of a gentle checklist for writers to use before submission. It is not a fixed set of criteria, but it offers questions that can help writers pause before sending off a manuscript.

Does the piece have enough variation in sentence rhythm, or does every paragraph move at the same steady pace? Are there sentences that are too long without adding meaningful value? Are there places where filler phrases are being used simply to connect ideas rather than truly clarify the argument? Are there passages that sound smooth but remain overly generic, and could therefore be replaced with more concrete phrasing?

In addition, does the piece include any useful guiding questions for the reader, or is it written entirely in a one-directional expository mode? Are there places where a detail from lived experience, a specific impression, or a more vivid illustration could help reduce dryness? Are passive constructions really necessary, or should they be turned into active ones in order to clarify the writer’s role? And most importantly, have all figures, author names, publication years, and references been checked against real sources?

These questions may sound simple, but they are useful. Sometimes what improves a manuscript is not a stronger tool, but simply a more careful rereading.

Being transparent about AI use may be wiser than quietly concealing it

A notable concluding point of Coffee Talk #4 was the emphasis on transparency. Rather than treating AI as something that must be hidden, the discussion offered another perspective. In many academic contexts today, using AI to support language, phrasing, or fluency is becoming more acceptable, provided that the writer keeps control over the content and bears full responsibility for the manuscript.

From this perspective, a brief note at the end of a manuscript may be worth adding where journal or institutional guidelines allow it. The point of such a note is not to legitimize handing the writing over to a machine. It shows that the writer understands the boundary between technical assistance and academic responsibility. What matters is not merely whether a declaration is made, but whether the writer is genuinely honest about their process.

What remains from Coffee Talk #4 is more than a few technical tips

Looking back on the discussion as a whole, the topic was not only about techniques for revising text. It was also about how people can keep their role in an age of increasingly powerful tools. AI can help make a scholarly text clearer, smoother, or more concise. But to become trustworthy work, the text still needs things that tools struggle to replace: care with data, restraint in claims, the capacity for self-critique, and the writer’s sense of responsibility.

Rather than asking how to make a text “look less like AI,” then, the more fitting question is how to ensure that the piece remains one’s own even when AI is used. One’s own in the framing of the issue. One’s own in the selection of evidence. One’s own in tone, in caution, and in the final decision about what may be said and what should not yet be stated too strongly.

If there is one thing to take away from Coffee Talk #4, it is not a single technical trick but an attitude toward working with text. Tools may be used where they help, but the work of thinking should not be handed over to them entirely. Support may be sought at the level of expression, but verification, revision, and responsibility must remain with the writer.